Hello Community! This week is our quarterly CA Agile Central Hackathon, and I've written an app that I think you're going to love. Have you had Teams that:

- Have trouble consistently and predictably delivering work?

- Need actionable data to guide them towards their next performance improvement?

- Spend too much time estimating because they can't agree on what the estimates should be?

- Are experimenting with Scrumban and need a framework for tracking Team performance metrics?

If so, you should try out my Estimation Calibration app, which guides you through analyzing your Team's cycle times by plan estimate to achieve more consistent and predictable estimation. Below are an overview and instructions for using it. If you try it, I'd love to hear your feedback by the end of the week (Friday January 5) so I can make the app even better and potentially include your feedback in my Hackathon demo video. I'm passionate about using data to guide Teams to their highest performance yet, and I hope you are too!

App Overview

The app starts by showing your Team's cycle times by estimate for a given Release:

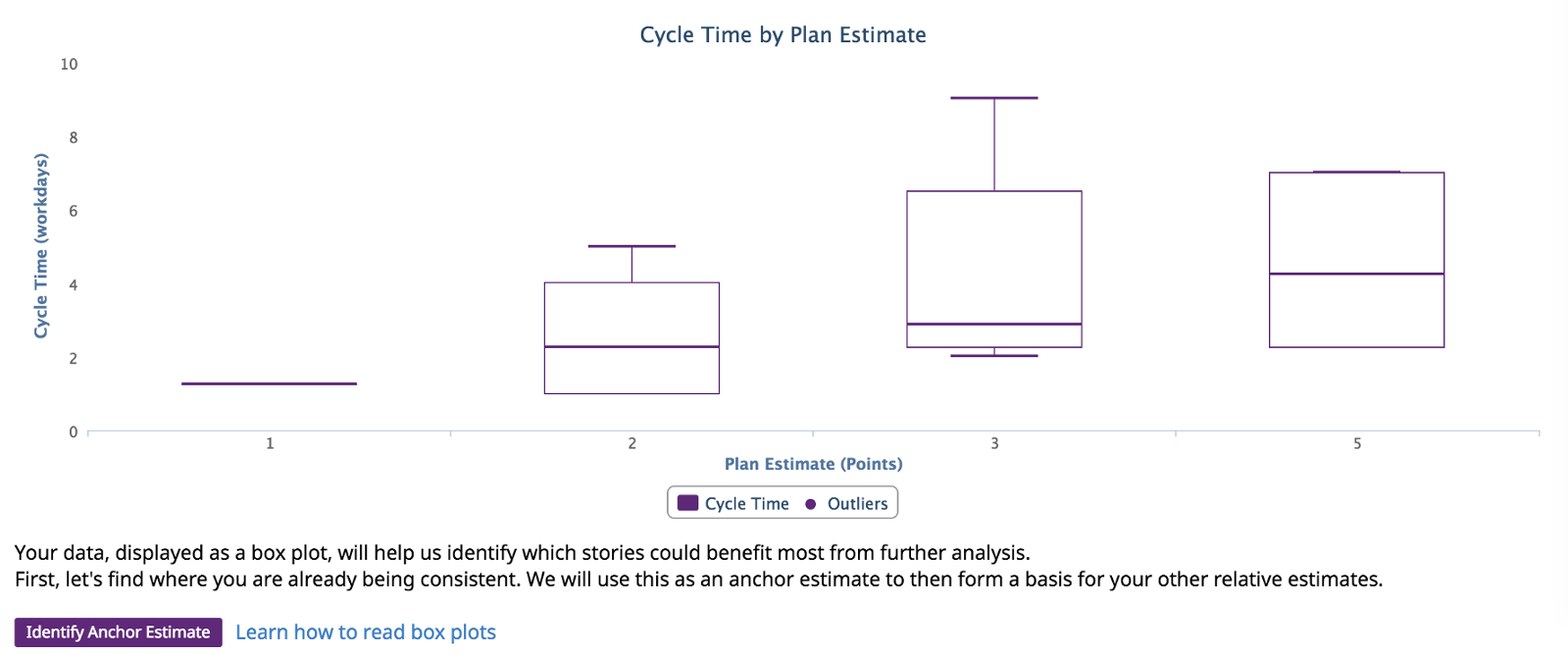

Although this graph is valuable as-is for a quick check of consistent delivery, it's only the first step in the app's guided analysis. Clicking "Calibrate Estimates" shows the data as box plots to highlight the cycle time distribution for each plan estimate:

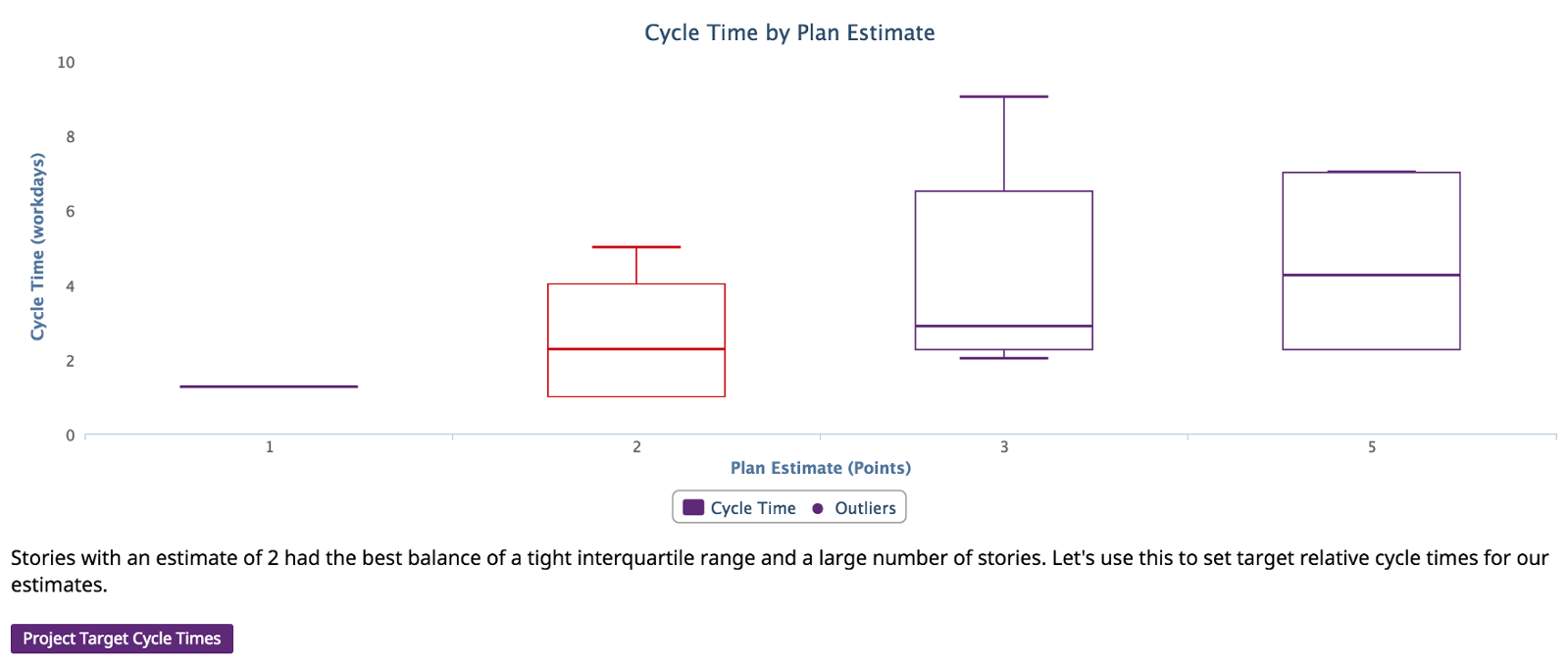
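If you'd like to see the idea behind the box plots, here's a rough sketch in Python. It is an illustration only, not the app's actual code: the `box_stats` name, the `(plan_estimate, cycle_time_days)` tuple layout, and the choice of the "inclusive" quartile method are all my assumptions.

```python
from collections import defaultdict
from statistics import quantiles


def box_stats(stories):
    """Group cycle times by plan estimate and return box plot statistics.

    `stories` is a hypothetical list of (plan_estimate, cycle_time_days) tuples.
    """
    by_estimate = defaultdict(list)
    for estimate, cycle_days in stories:
        by_estimate[estimate].append(cycle_days)

    summary = {}
    for estimate, times in sorted(by_estimate.items()):
        if len(times) < 2:
            continue  # quantiles() needs at least two data points
        q1, median, q3 = quantiles(times, n=4, method="inclusive")
        summary[estimate] = {
            "min": min(times),
            "q1": q1,
            "median": median,
            "q3": q3,
            "max": max(times),
        }
    return summary
```

A tight box (small gap between `q1` and `q3`) means the Team delivers stories of that estimate in a consistent amount of time.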

Ideally, we'd see tight box plots that shift upwards as the estimates increase. That's rarely the case for a Team, so the app's next step is to identify the estimate your Team already delivers most consistently. By starting with what your Team is already good at, the app eases the transition to what could be improved:

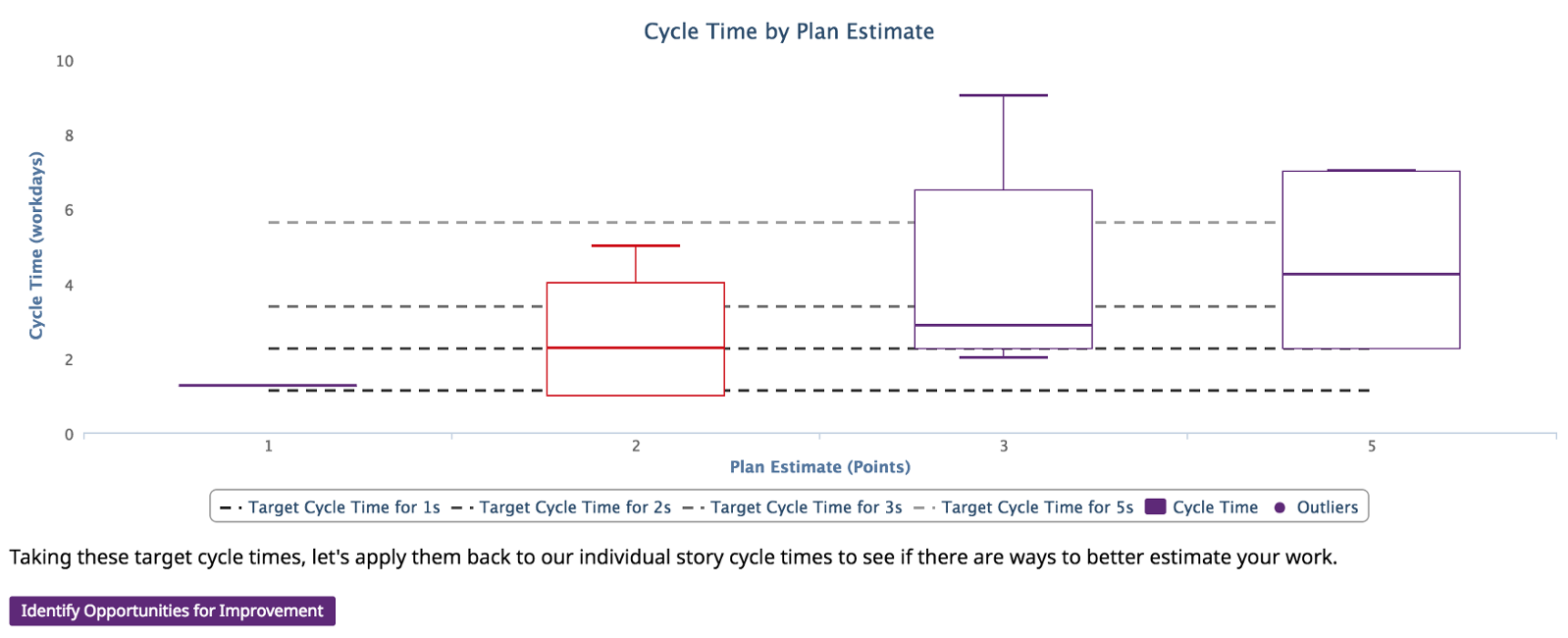
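One way to think about picking the anchor is sketched below. How "most consistent" is measured here is my assumption for illustration: I use the interquartile range divided by the median, so estimates with naturally longer cycle times aren't penalized. The `pick_anchor` name and the `min_stories` cutoff are also hypothetical, and the app itself may measure consistency differently.

```python
from statistics import quantiles


def pick_anchor(times_by_estimate, min_stories=3):
    """Return the plan estimate with the tightest relative spread.

    `times_by_estimate` is a hypothetical dict mapping a plan estimate to a
    list of cycle times in days. Spread is measured as IQR / median, which is
    an assumption made for this sketch.
    """
    best_estimate, best_spread = None, None
    for estimate, times in times_by_estimate.items():
        if len(times) < min_stories:
            continue  # too few stories to judge consistency
        q1, median, q3 = quantiles(times, n=4, method="inclusive")
        if median <= 0:
            continue  # avoid dividing by zero for same-day stories
        spread = (q3 - q1) / median
        if best_spread is None or spread < best_spread:
            best_estimate, best_spread = estimate, spread
    return best_estimate
```

If no estimate has enough stories, the function returns `None`, which is a hint that the Release doesn't have enough data to calibrate against yet.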

Using this anchor, the app then defines ideal cycle times for each estimate. It scales the anchor's median cycle time by how much each other plan estimate differs from the anchor's, producing a relative ideal cycle time for every estimate. You can already start to see which sizes of stories need the most calibration:

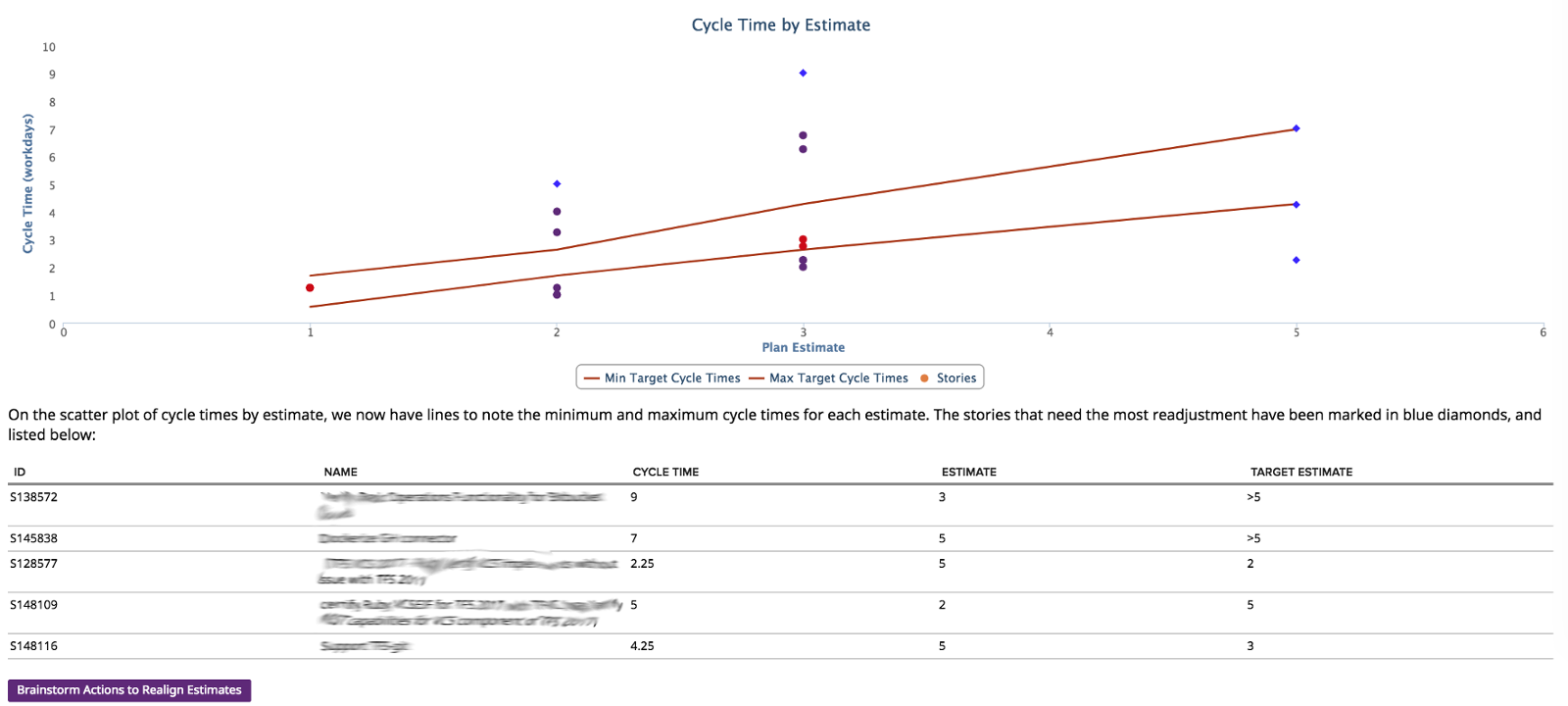
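As a worked illustration of the scaling, here's a small sketch. It assumes the scaling is proportional (a story estimated at twice the anchor's points gets twice the anchor's median cycle time) and that each band is the ideal value plus or minus a fixed fraction. Both of those, along with the `ideal_bands` name and the `tolerance` parameter, are my assumptions for the example rather than a description of the app's exact math.

```python
def ideal_bands(anchor_estimate, anchor_median, estimates, tolerance=0.25):
    """Return {estimate: (low, high)} ideal cycle time bands in days.

    Assumes proportional scaling from the anchor and a symmetric band of
    +/- `tolerance` around each ideal value (both hypothetical choices).
    """
    if anchor_estimate <= 0:
        raise ValueError("anchor estimate must be positive")
    bands = {}
    for estimate in estimates:
        ideal = anchor_median * estimate / anchor_estimate
        bands[estimate] = (ideal * (1 - tolerance), ideal * (1 + tolerance))
    return bands
```

For example, with an anchor of 3 points and a median of 6 days, a 5-point story would have an ideal cycle time of 10 days under these assumptions.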

Using these bands of ideal cycle times per estimate, the app applies them back to the scatter plot to show which stories fell inside their ideal band and which fell outside. Since we want our Agile Teams to make incremental improvements, the app identifies the top five stories that could use the most adjustment, rather than having the Team focus on every problem.
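Here's a rough sketch of how stories could be checked against the bands and narrowed down to the top five. Ranking by distance in days from the nearest band edge is my assumption about what "most adjustment" means, and the `top_outliers` name, the story IDs, and the tuple layout are hypothetical.

```python
def top_outliers(stories, bands, limit=5):
    """Return IDs of the stories furthest outside their ideal band.

    `stories` is a hypothetical list of (story_id, plan_estimate,
    cycle_time_days) tuples; `bands` maps a plan estimate to (low, high).
    """
    outliers = []
    for story_id, estimate, cycle_days in stories:
        if estimate not in bands:
            continue  # no ideal band defined for this estimate
        low, high = bands[estimate]
        if cycle_days < low:
            distance = low - cycle_days
        elif cycle_days > high:
            distance = cycle_days - high
        else:
            continue  # inside the ideal band, nothing to adjust
        outliers.append((distance, story_id))
    # Largest distance first, then keep only the first `limit` stories
    outliers.sort(key=lambda item: item[0], reverse=True)
    return [story_id for _, story_id in outliers[:limit]]
```

Capping the list keeps the conversation focused: the Team discusses a handful of stories instead of every point on the scatter plot.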

Finally, the app guides your Team into a retrospective to identify actions for making better estimate choices next time, leading to more consistent cycle times.

Installation Instructions

If you've never used a custom app in Agile Central, you're missing out! Check out "Extend CA Agile Central With Apps" in CA Agile Central Help for instructions. Specifically, you'll be using a Custom HTML app to copy my code into Agile Central; see "Custom HTML" in CA Agile Central Help. If you need any help while installing the app, just let me know!

My app can be found at https://raw.githubusercontent.com/wkammersell-ca/estimation-calibration/master/deploy/App-uncompressed.html. As this was built during a Hackathon, it is unsupported by CA. The app only reads your data and doesn't try to update or write to your Agile Central data.

Again, any feedback or comments are greatly appreciated, and have an awesome start to 2018!