**** jOAKO, clarifying here. That Max Size column shows the largest size the queue has reached. So at one point it had 1.4M items in the queue. Woah.

Also, when you find yourself in this situation, shut down your connectors and the other SOI services on the UI box. Let the manager just run and process all these items w/o the connectors sending in more data for it to process.

Do you have actions associated with specific alerts that the SOI MGR might be waiting on before it moves along? If so, check what it's doing and tweak accordingly.

If you stop your SOI manager then check your:

...\CA\SOI\tomcat\webapps\activemq-web\activemq-data

folder. If it's filled with stuff, delete it all, then restart just the CA UCF Broker and the CA SAM Application Server. Let it run until the MGR Debug Queue Monitor page above settles down.
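If you want to see how much has piled up in that folder before you delete anything, here is a minimal Python sketch. It is not part of SOI; `summarize_folder` is a helper name I made up, and you pass in the full path to your own activemq-data folder since the install root differs per box. It only reads file sizes and does not delete anything.

```python
import sys
from pathlib import Path


def summarize_folder(folder):
    """Return (file_count, total_bytes) for every file under folder, recursively."""
    root = Path(folder)
    file_count = 0
    total_bytes = 0
    for path in root.rglob("*"):
        if path.is_file():
            file_count += 1
            total_bytes += path.stat().st_size
    return file_count, total_bytes


if __name__ == "__main__" and len(sys.argv) > 1:
    # First argument: full path to your activemq-data folder (hypothetical usage).
    count, size = summarize_folder(sys.argv[1])
    print(f"{count} files, {size / (1024 * 1024):.1f} MiB")
```

Run it with the SOI manager stopped, the same as you would for the manual check, so the numbers are not changing underneath you.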

Also run the "Database Tables" and "Show Report" utils. Check your Alert History. If it's very large, you should run your DB maintenance commands to purge old alert history.

Open a command prompt and CD to:

C:\Program Files (x86)\CA\SOI\Tools>

soitoolbox -x --purgeClearedAlerts 90

soitoolbox -x --cleanHistoryData 90

soitoolbox -x --purgeDBInconsistencies -b 300 -t 1200

soitoolbox -x --rebuildIndexes -b600 -t600
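If you end up running these four regularly, a small wrapper can run them in order and stop at the first failure. This is only a sketch under a few assumptions: the flags and values are copied verbatim from the commands above, `run_maintenance` and `build_commands` are names I made up, and I am assuming soitoolbox returns a nonzero exit code when a step fails, which I have not verified. It defaults to a dry run that only prints the commands.

```python
import subprocess
import sys

# Arguments copied as-is from the commands above; change the values to suit your site.
MAINTENANCE_STEPS = [
    ["-x", "--purgeClearedAlerts", "90"],
    ["-x", "--cleanHistoryData", "90"],
    ["-x", "--purgeDBInconsistencies", "-b", "300", "-t", "1200"],
    ["-x", "--rebuildIndexes", "-b600", "-t600"],
]


def build_commands(tool="soitoolbox"):
    """Return each maintenance step as a single command-line string."""
    return [" ".join([tool] + args) for args in MAINTENANCE_STEPS]


def run_maintenance(tools_dir, dry_run=True):
    """Print each command; unless dry_run is set, also run it from tools_dir."""
    handled = []
    for cmd in build_commands():
        print(cmd)
        if not dry_run:
            # check=True raises CalledProcessError on a nonzero exit code,
            # so later steps are skipped if an earlier one fails (assumption:
            # the tool actually reports failures through its exit code).
            subprocess.run(cmd, cwd=tools_dir, shell=True, check=True)
        handled.append(cmd)
    return handled


if __name__ == "__main__":
    # Dry run unless --run is given on the command line.
    tools = r"C:\Program Files (x86)\CA\SOI\Tools"
    run_maintenance(tools, dry_run="--run" not in sys.argv)
```

Do a dry run first and compare the printed lines against what you would type by hand before adding `--run`.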

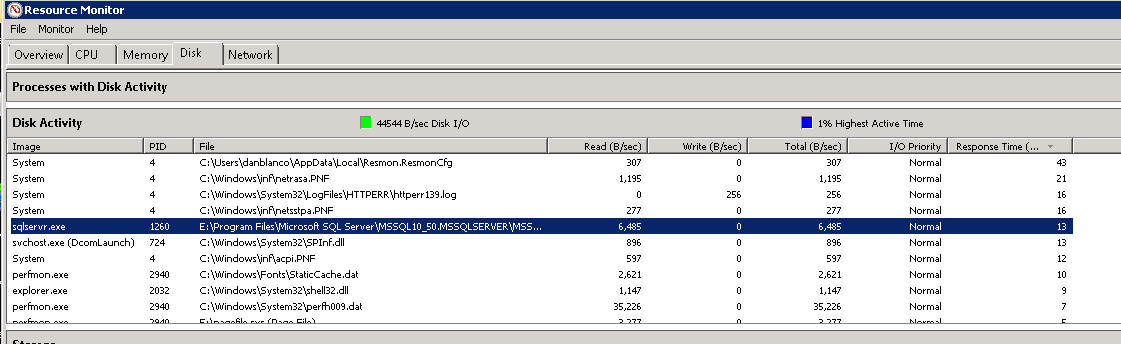

Also, if your SOI MGR is taking that long, another thing to check is the Disk Activity Response Time on your DB box and your SOI MGR box.

If the numbers are high, then that's the bottleneck. Check the overall system running your SOI deployment to see if it's maxed out on I/O.