One of the things I routinely discuss with customers is the dichotomy of life in IT today. It can be summed up this way:

“The very cool thing going on is that the rate of change in IT has never been faster than it is today. The very scary thing going on is that the rate of change in IT has never been faster than it is today!”

Blog link to source: https://streamsets.com/blog/data-motion-evolution/

This is actually a bigger issue than many people realize at first, and it has multiple components. The technologies themselves are changing at a pace we have never seen before: CPU design cycles, for example, are shorter than ever, and SSD storage technology now turns over in 18-24 month iterations. In addition, the life cycle of applications is running at an absolutely frantic pace, particularly its first portion, which covers the development and deployment of new applications. The vast majority of applications are no longer expected to have 5+ year life cycles, and new applications are being developed faster than ever. The time from recognizing a gap in an interface or offering to developing and deploying an applet or full application to fill it is almost the blink of an eye. Add to that the sheer volume of data, including the enormous number of things generating it, which is astronomical. Just a few years ago, the idea that the world would generate many zettabytes of data in a single year was laughed at. And yet predictions are that by 2020 we will have accumulated 44 zettabytes of data.

Okay, you’re thinking, but this is just the situation we live with. Stop and consider for a moment, though. It’s not just the data that your business and applications are generating. There is also data ABOUT that data. Metadata, as it’s called, can be metrics you generate about the type, use or trend of data in your applications, but it can also cover the metrics by which your data environment runs, such as Transactions Per Second (TPS). Add to this the monitoring of the environment that supports your data, and then throw in the fact that data generation, access and transfer are happening at lower latencies than ever before. And just to make it more interesting, let’s add the stress that the infrastructure is meant to be both invisible and unbreakable. Neither the consumer nor the application owner has any interest in, or sympathy for, how the infrastructure does what it does. They only care that the application is super-fast and never stops. And with Fibre Channel SAN implementations, the applications being hosted or supported are frequently the most mission-critical environment the company has. An application outage is not merely inconvenient; it can be a career- or company-damaging event. So, at such speed and with no forgiveness, how is any human administrator supposed to keep pace with the environment?

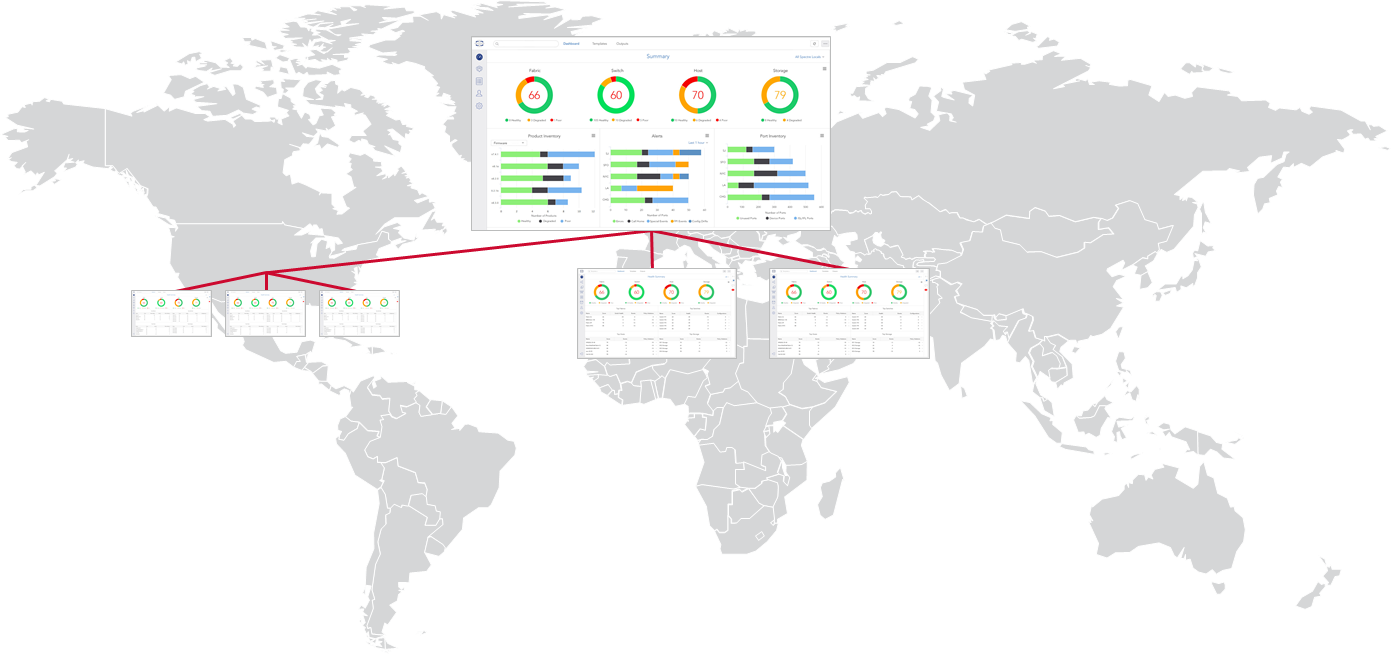

It is in this environment that we are launching the Brocade SANnav management platforms. To address these complexities, we offer both Brocade SANnav Management Portal, for direct management of SAN fabrics, and Brocade SANnav Global View, for customers who want to see multiple sites and fabrics from a single management view. You might be thinking that other tools on the market have similar-sounding descriptions, so why Brocade SANnav? That’s a fair question, so let’s take a deeper look.

First of all, one of the things that is true about Brocade SAN hardware platforms is that an incredible amount of telemetry gathering and monitoring happens in silicon. We actually measure every frame on every port of every switch. Depending upon your management choices, we measure latency on every port in the fabric every 2.5 microseconds. In a world where NVMe devices can have latencies below 20 microseconds and array controller latencies are dropping under 100 microseconds, that kind of telemetry can be critical. But when so much metadata and so many performance measurements are being collected, how does the administrator decide what to pay attention to? Helping with that decision is one of the advantages of SANnav technology:

- Easy-to-understand topology views that show device or fabric health, so you are quickly made aware of points of interest.

- Machine learning that takes the reams of measurements and turns them into actionable insights: which points you need to focus on, along with recommendations on what to do about the situation.

- Streamlined common deployment processes that shorten the time a human has to be involved (humans being the scarcest resource in the environment).

- Automated data collection and reporting, delivered in views customized to your team or environment.

- Checks for “drift” away from the known gold configuration of network elements, with autocorrection back to the proper settings.

- SANnav Global View, which lets you easily drill down from a corporate-wide view to the exact location that needs your attention.
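The drift check mentioned in the list can be illustrated with a generic sketch. This is not SANnav’s implementation and uses no SANnav or Brocade API; it simply assumes a switch configuration can be represented as a flat dictionary of settings, and the setting names in the hypothetical `GOLD_CONFIG` are made up for illustration. It reports every setting that differs from the gold copy and produces a corrected configuration.

```python
from typing import Any, Dict, Tuple

# Hypothetical gold configuration. The setting names are illustrative only
# and are NOT taken from any Brocade or SANnav API.
GOLD_CONFIG: Dict[str, Any] = {
    "port_speed_gbps": 32,
    "trunking_enabled": True,
    "zone_config_name": "prod_cfg",
}


def find_drift(current: Dict[str, Any], gold: Dict[str, Any]) -> Dict[str, Tuple[Any, Any]]:
    """Return {setting: (current_value, gold_value)} for each setting that differs from gold."""
    drift: Dict[str, Tuple[Any, Any]] = {}
    for key, expected in gold.items():
        actual = current.get(key)  # a missing setting shows up as None and counts as drift
        if actual != expected:
            drift[key] = (actual, expected)
    return drift


def autocorrect(current: Dict[str, Any], gold: Dict[str, Any]) -> Dict[str, Any]:
    """Return a copy of current with every drifted setting reset to its gold value."""
    corrected = dict(current)  # leave the caller's dictionary untouched
    for key, (_, expected) in find_drift(current, gold).items():
        corrected[key] = expected
    return corrected


if __name__ == "__main__":
    observed = {"port_speed_gbps": 16, "trunking_enabled": True}
    print(find_drift(observed, GOLD_CONFIG))
    print(autocorrect(observed, GOLD_CONFIG))
```

In this sketch, settings that exist in the current configuration but not in the gold copy are left alone; a real tool would also need a policy for those, and would likely ask for confirmation before pushing corrections to production switches.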

It’s an article of faith among SAN administrators that when application issues occur, the SAN is the first place people point (and the least likely location of the actual problem). These administrators actively look for anything that will shorten their MTTI (Mean Time To Innocence). And on those occasions when the telemetry does point to the SAN, it allows administrators to drastically shorten the “incident window”.

The combination of Brocade SANnav Management Portal and Brocade SANnav Global View provides a solution set geared towards meeting the challenges of today’s IT organization. It seems very clear that the human element isn’t going to be fast enough to cope with the environment without help. So do yourself a favor and take a look at a platform designed to save you time, money and headaches in SAN management. Tools of this type and capability are going to become the “necessary kit” that IT administrators need to survive the next wave in IT.
