Hi guys,

I'll try to provide as much information as I can, including what we have already tried.

I have a Sandbox environment running version 13.1 SP8. The server specifications are below:

App: Windows Server 2008 R2 Standard Virtual Machine SP1

64 Bit

RAM: 12GB (Allocated 4GB to the app in the properties.xml)

CPU0 Cores: 4 Processors 4

CPU1 Cores: 4 Processors 4

C: 40GB (10GB Free)

D: 40GB (26GB Free - Clarity is installed on D:)

DB: Windows Server 2008 R2 Standard Virtual Machine SP1

64 Bit

RAM: 32GB (In SQL Studio, I have set the minimum server memory to 10GB and the max server memory to 28GB)

CPU0 Cores: 4 Processors 4

CPU1 Cores: 4 Processors 4

C: 40GB (19GB Free)

D: 700GB (60GB Free - contains DB files and log file)

N: 2GB (1.77GB Free - used for SSIS)
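To put rough numbers on the headroom in the specs above, here is a minimal Python sketch. The dictionary layout and the `headroom` helper are just my own illustration, the figures are copied from the list above, and I am assuming the N: drive figure is in GB. Note that "reserved" only reflects the configured caps (JVM heap on the app server, max server memory on the DB server), not actual usage.

```python
# Figures copied from the server specs above; all sizes in GB.
# "reserved" is the configured cap: the JVM heap on the app server and
# SQL Server's max server memory on the DB server.
# Each drive maps to (total, free); the N: free figure is assumed to be GB.
servers = {
    "app": {"ram": 12, "reserved": 4,
            "drives": {"C": (40, 10), "D": (40, 26)}},
    "db": {"ram": 32, "reserved": 28,
           "drives": {"C": (40, 19), "D": (700, 60), "N": (2, 1.77)}},
}


def headroom(spec):
    """Return RAM left outside the configured cap and percent free per drive."""
    ram_left = spec["ram"] - spec["reserved"]
    free_pct = {
        drive: round(100 * free / total, 1)
        for drive, (total, free) in spec["drives"].items()
    }
    return ram_left, free_pct


for name, spec in servers.items():
    ram_left, free_pct = headroom(spec)
    print(f"{name}: {ram_left} GB RAM outside the cap, free disk % = {free_pct}")
```

On these figures the DB server's D: drive, which holds the DB files and log file, is the tightest at roughly 8.6% free, and the DB server has 4GB of RAM left outside SQL Server's maximum.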

Our Sandbox is usually used only for testing fixes and small pieces of development. However, we recently upgraded our Development environment to 14.2, and we cannot develop in 14.2 and promote to Production because Production is still on 13.1. So we have been using the Sandbox for development work instead.

More development work is certainly taking place on it than before, but to me the environment looks adequately resourced.

However, the development team has reported the following:

The system responds normally at times, but most of the time we are seeing the issues below:

Jobs are taking longer than normal to run, e.g. the 'Annuities Marketing - Disable Unrequired Notification for all users' job normally takes 3 seconds but took 1 minute 56 seconds last night.

Database updates are taking longer than normal to run, e.g. an update statement to a trigger normally takes 1 second, but one ran for 8 minutes yesterday before being cancelled.

General navigation is very slow, e.g. logging in, navigating to projects, opening resources on the admin side, checking the jobs log or process engine.
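To give a sense of scale for the timings above, a quick Python sketch of the slowdown factors. The `slowdown` helper is just my own illustration, and the update figure is only a lower bound because the statement was cancelled before it finished.

```python
def slowdown(normal_seconds, observed_seconds):
    """How many times longer the observed run took than the normal run."""
    return observed_seconds / normal_seconds


# Job: normally 3 s, took 1 min 56 s last night.
job_factor = slowdown(3, 1 * 60 + 56)

# Update statement: normally 1 s, cancelled after 8 min,
# so the real factor is at least this large.
update_factor = slowdown(1, 8 * 60)

print(f"job: about {job_factor:.0f}x slower")
print(f"update: at least {update_factor:.0f}x slower")
```

So the job was roughly 39 times slower than normal, and the update was at least 480 times slower.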

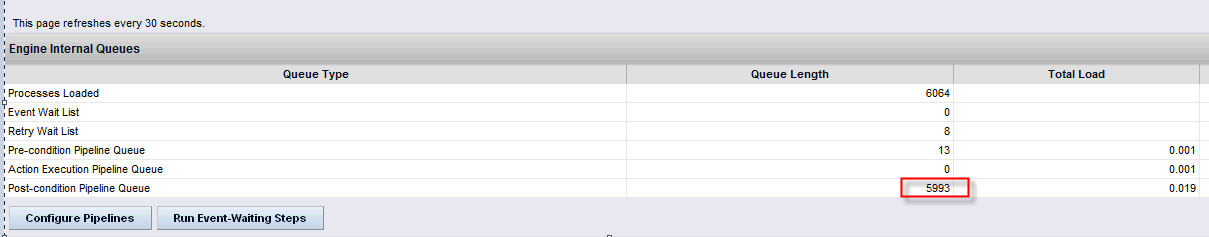

As of this morning, the process engine has become stuck: no new processes are starting and the queue length is not reducing (I have restarted the bg service).

Steps I have taken so far:

Increased the memory allocation available to the app from 2.5GB to 4GB (<applicationServerInstance id="app" serviceName="Niku Server" rmiPort="23791" jvmParameters="-Xms4096m -Xmx4096m")

Increased the RAM on the DB server from 16GB to 32GB.

I have also applied some of the tips suggested in the performance tuning webinar.
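As a sanity check on the heap change in the first step, here is a small Python sketch that reads the `-Xms` and `-Xmx` values out of the `jvmParameters` string quoted above. The `heap_settings_mb` helper is hypothetical and only handles values with a k, m or g suffix; it does not read properties.xml itself.

```python
import re

# Value copied from the jvmParameters attribute quoted above.
jvm_parameters = "-Xms4096m -Xmx4096m"

# Multipliers to convert each supported suffix to megabytes.
UNIT_TO_MB = {"k": 1 / 1024, "m": 1, "g": 1024}


def heap_settings_mb(params):
    """Extract -Xms / -Xmx values (with a k/m/g suffix) from a JVM
    parameter string and return them in MB."""
    found = {}
    for flag, size, unit in re.findall(r"-(Xms|Xmx)(\d+)([kKmMgG])", params):
        found[flag] = int(size) * UNIT_TO_MB[unit.lower()]
    return found


heap = heap_settings_mb(jvm_parameters)
print(heap)
print("initial == max:", heap.get("Xms") == heap.get("Xmx"))
```

This confirms that the initial and maximum heap are both 4096 MB, i.e. the 4GB mentioned above.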

Is there anything else I can try?